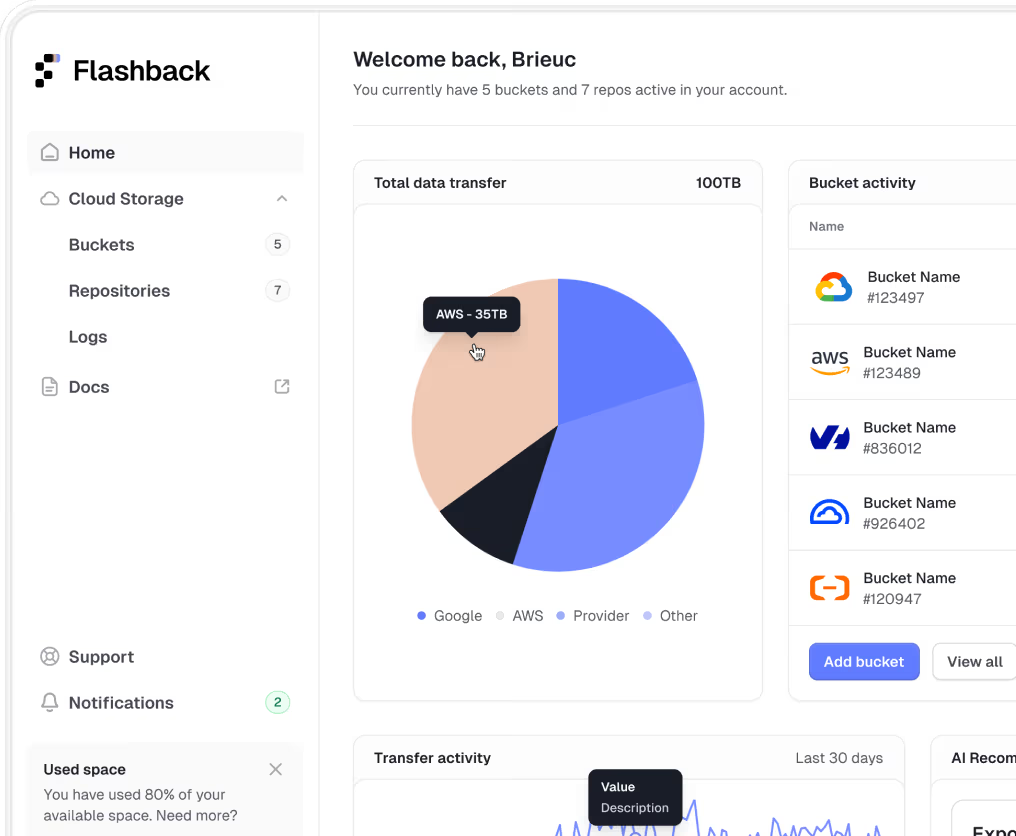

Flashback Enterprise AI Gateway

Secure Enterprise Gateway for AI Access and Governance

Secure AI access with full policy control and multi-provider flexibility across ChatGPT, Google Gemini, and Claude.

Capabilities

Security, compliance, and flexibility

Flashback routes every AI request, whether it comes from employees or from applications, through a secure, policy-controlled gateway. You get complete oversight, vendor independence, and full control over how models are accessed and how your data is protected.

Policy and Compliance Layer

Enforce policies and anonymize data on every request.

Multi-Provider AI Routing

Switch and blend AI providers to avoid vendor lock-in and optimize for cost or performance.

Visibility and Governance

Monitor usage, costs, and policy compliance in real time.