Security, Compliance, and Provider Independence

Control AI access without locking into one model vendor

Flashback sits between your apps (and employees) and model providers. Apply consistent policies, manage credentials centrally, and monitor usage across workspaces, while keeping the flexibility to switch providers for cost, reliability, or performance.

PII & Policy Controls

Screen prompts and responses. Choose outcomes like log, alert, or block to match your governance requirements.
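To make the log, alert, and block outcomes concrete, here is a minimal sketch of how a screening step could map matched rules to an outcome. It is not Flashback's implementation: the `Rule` type, the two regex patterns, the `screen` function, and the extra "allow" outcome for unmatched text are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical rule set: patterns and rule names are illustrative only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class Rule:
    name: str
    pattern: re.Pattern
    outcome: str  # one of "log", "alert", "block"


RULES = [
    Rule("email", EMAIL, "alert"),
    Rule("us_ssn", US_SSN, "block"),
]

# Assumed ordering: when several rules match, the strictest outcome wins.
SEVERITY = {"allow": 0, "log": 1, "alert": 2, "block": 3}


def screen(text, rules=RULES):
    """Return (strictest outcome, names of matched rules) for a prompt or response."""
    matched = [r for r in rules if r.pattern.search(text)]
    outcome = max(
        (r.outcome for r in matched),
        key=SEVERITY.__getitem__,
        default="allow",  # nothing matched
    )
    return outcome, [r.name for r in matched]
```

In this sketch, text that matches both rules resolves to "block" because the strictest outcome takes precedence. A real deployment would define its own rules and precedence.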

Multi-Provider Routing

Connect multiple providers and keep your application layer stable while you evolve model choices and routing rules.

Visibility & Governance

Track tokens, latency, errors, and policy events across teams and projects in real time.

Product

One governed layer for people and products

Route AI usage through a single control plane via Private Chat for employees or the Gateway for your applications.

Designed for security, IT, and enterprise enablement

Private Chat for enterprise rollout

Give employees a sanctioned way to use the best models while keeping access scoped, policies enforced, and usage visible across the organization.

Scoped usage by team and project

Align permissions to how your org is structured so users only access approved models and workspaces.

Guardrails on every interaction

Screen prompts and responses with configurable outcomes: log, alert, or block.

Central analytics and context workflows

See adoption and cost across the organization, and support long-running context workflows for enterprise knowledge use cases.

Designed for developers and engineering teams

OpenAI-compatible AI gateway for production applications

Keep one API contract for your apps while you centralize routing, governance, and observability across providers.

OpenAI-compatible endpoint

Integrate quickly and keep a consistent interface as providers and routing evolve.
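As a rough illustration of what an OpenAI-compatible interface means in practice, the sketch below builds a standard chat completions request and points it at a gateway URL instead of a provider. The base URL, project key, and model name are placeholders, not real Flashback values, and the exact path a given deployment exposes is an assumption. The request is only constructed here, not sent.

```python
import json
import urllib.request

# Placeholders (assumptions): substitute the values for your own deployment.
GATEWAY_BASE_URL = "https://gateway.example.com/v1"
PROJECT_KEY = "project-key-placeholder"


def build_chat_request(prompt, model="example-model"):
    """Build an OpenAI-style chat completions request aimed at the gateway."""
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    return urllib.request.Request(
        f"{GATEWAY_BASE_URL}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {PROJECT_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("Hello")
# urllib.request.urlopen(req) would send the request; it is omitted here
# because the URL above is a placeholder.
```

Because the request shape stays the same, changing which provider serves it would be a gateway-side routing decision rather than an application code change.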

Scoped keys, centralized provider credentials

Use project-level keys for apps while provider credentials are managed centrally and encrypted at rest.

Provider portability and deep observability

Connect providers once, reuse across projects, and monitor requests, tokens, latency, and errors to manage cost and reliability.

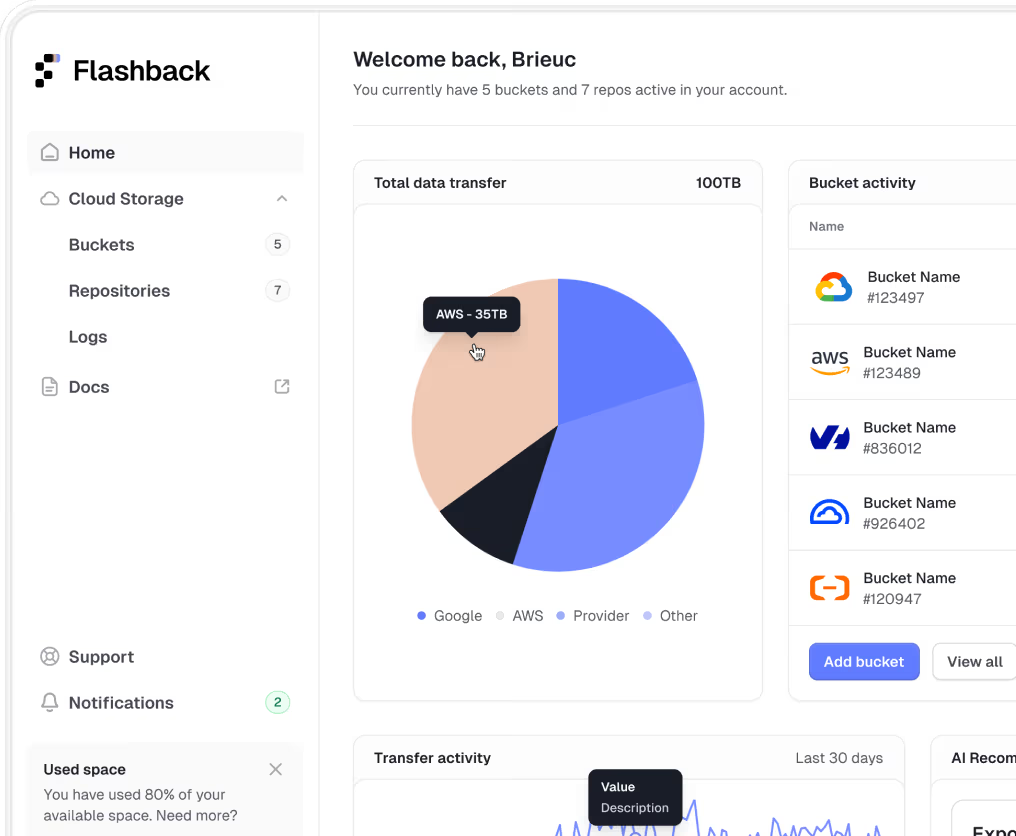

Management Dashboard

One console for policies, access, and visibility

Manage policies, workspaces, permissions, and connected model providers from one place. Monitor usage, cost signals, and policy outcomes across teams.

Manage policies at org, workspace, and project scope

Define and enforce governance rules at every level of your organization hierarchy.

Control users, roles, and permissions

Assign roles and manage access at the team and project level for fine-grained control.

Monitor usage and governance signals across teams

Track adoption, cost trends, and policy outcomes from a single operational view.

Frequently Asked Questions

No. Flashback does not replace model providers such as OpenAI, Google (Gemini), or Anthropic (Claude). It provides a governed gateway layer to control how your organization accesses and uses those models.

Flashback supports leading commercial models and OpenAI-compatible endpoints, including self-hosted models.

Yes. Configure routing across multiple providers for resilience, experimentation, and operational control (e.g., fallback, cost-aware routing).

Data is routed according to your configuration. You control what is logged or stored (prompts, responses, metadata), and where. Flashback does not use your data to train models.

Yes. Flashback supports enterprise deployment models, including running inside your cloud environment to meet networking and data residency requirements.

Both. Capture operational telemetry such as request volume, errors, token usage, and latency, with dashboards and optional exports.

Many teams can integrate quickly thanks to an OpenAI-compatible API and minimal code changes. Production rollout follows your existing security and approval processes.
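The fallback routing mentioned above can be sketched in a few lines. This is a generic illustration of ordered fallback, not Flashback's routing engine: `route_with_fallback`, `AllProvidersFailed`, and the two stand-in provider functions are hypothetical names.

```python
class AllProvidersFailed(Exception):
    """Raised when every configured provider call has failed."""


def route_with_fallback(prompt, providers):
    """Try providers in order; return (name, reply) from the first that succeeds.

    `providers` is a sequence of (name, callable) pairs, where each callable
    takes the prompt and returns a reply or raises on failure.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any failure triggers the next provider
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-in providers for demonstration only.
def flaky_primary(prompt):
    raise TimeoutError("upstream timed out")


def stable_secondary(prompt):
    return f"echo: {prompt}"


result = route_with_fallback(
    "hi", [("primary", flaky_primary), ("secondary", stable_secondary)]
)
```

Cost-aware routing could follow the same shape by sorting the provider list on an estimated price before trying each one.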
